Regression

They got what they wanted, and now they're screwing it up.

"They" in that sentence is entertainment industry rights holders, who campaigned for years in bad ways and worse ways to get rid of "piracy" - that is, unauthorized copying and digital distribution of their products. In pursuit of that ideal, they sued popular companies (Napster, MP3.com) out of existence; prosecuted users and demanded ISPs' help in doing so; applied digital rights management to everything from software and classic books to tractors and wheelchairs; and pursued national legislation and trade treaties to entrench their business model.

"What they wanted" was people paying for the cultural artefacts they finance. How they got it, in the end, was not through any of the above efforts. Instead, as many scholars and activists told them during those years it would be, the solution was legally authorized services for which people were willing to pay. And thus grew and flourished video services such as Netflix, YouTube, Hulu, and, latterly, Disney, Amazon Prime, Apple TV, and music services like Apple iTunes, Spotify, and Amazon Music. The industry began making money from digital downloads. So yay?

You would think. Instead, we're going backwards. The reality now is that paid services are becoming a chore to use: users complain the interfaces are frustrating, and that the thing they want to watch is always on some other service. Newspapers now track where to find popular older shows, and know only a sliver of the mass audience will be able to see some of the new material they review.

Result: pirate sites are back on top. You can find almost anything in one search, it's yours to watch any way you want within minutes, and any ads have been neatly excised. Like I said: they got what they wanted and then...

This tiny rant had two immediate provocations. The first was the release of Glyn Moody's new book, Walled Culture (available here as a freely downloadable PDF). The other was two Guardian stories by Jim Waterson about Buckingham Palace's wrangle with the UK's national broadcasters over the footage of the recent state funeral of Queen Elizabeth II. The BBC, ITV, and Channel 4 are allowed future use of just one hour's worth of clips; for anything else they must ask permission.

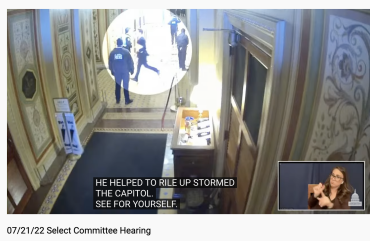

This was a state occasion, paid for by taxpayers, held on public streets and in public buildings, and the video recording was made by broadcasters, which are financed by universal license fees (BBC) and their own commercial activities (all of them). It's particularly bonkers because the entirety of the day's footage is readily available on torrent sites. The palace literally cannot control the footage as it could at the 1953 coronation - though it can limit broadcast. Waterson also reveals that behind the scenes during the various services palace staff and broadcasters shared a WhatsApp group in which the staffers sent a message every five minutes to approve or refuse the use of the previous video block. In our world of 2022, this power to micromanage how they are seen is more power than most people think the monarchy has. The palace is also claiming the right to veto the use of footage of the new monarch's accession service. This is the rawest form of copyright as entrenched power.

In Walled Culture, Moody recounts the Internet's three decades of copyright wrangles, and the resulting shrinkage of public access to culture. It's a great romp through a legal regime that, as Jessica Litman said circa 1998, people would reject if they understood it. Moody begins with the shift from analogue to digital media, then goes through the lawsuits, the battle to make the results of publicly funded research open to the public, web blocking and other censorship, the EU's copyright directive, and the regulatory capture that, as Moody says, leaves impoverished the artists and creators copyright law was originally designed to benefit.

My favorite chapter, however, is the one on copyright absurdities. Half of the commercial movies ever made are unavailable to view. Because of the way streaming is licensed, Netflix 2022 has a library perhaps a tenth the size of Netflix 2012 - or 2002, when the rental service's copy of a DVD could not be withdrawn. Yet digital media have a notoriously short life before they must be migrated to newer media and formats. Copyright is even why statisticians continue to use suboptimal statistical analysis: in the 1920s Karl Pearson refused fellow statistician Ronald A. Fisher permission to use his statistical tables.

As Moody shows, the impact of copyright law is widely felt, and its abuse even more so. Bear in mind that the original purpose was to balance the public interest (as opposed to the public's interest) in its own culture against the desirability of encouraging creators and artists to go on creating new works by giving them a relatively brief period of exclusivity in which to exploit their work. For that reason, a world in which piracy is the best option for accessing culture is not a good world. Moody proposes numerous fixes that roll back the worst elements and change the power imbalance. We do want to pay artists and creators, especially those whose voices have largely gone unheard in the past. Rights holders should not be - ahem - kings.

Illustrations: Queen Elizabeth II's funeral procession (via Wikimedia).

Wendy M. Grossman is the 2013 winner of the Enigma Award. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. Stories about the border wars between cyberspace and real life are posted occasionally during the week at the net.wars Pinboard - or follow on Twitter.
