Barbershop quartet

"It's unlikely that we'll have a barbershop quartet singing it out every time," said Emma Craddock. She was talking about ways to make it explicit to users when they are handing over data, kind of like the cookie directive on steroids. (You know, the thing that makes messages pop up on every website demanding that you accept cookies to make the site work.) She was speaking at this week's workshop run by the Meaningful Consent project at the University of Southampton. The main question up for consideration: what is meaningful consent, and how do we achieve it?

For a moment, I was entranced by the possibilities. A barbershop quartet! I know - or knew - someone who sang in one of those. It's sort of entrancing to imagine him, as a retired engineer, touring around to people's houses to pop up with his buddies, like out of a cake, to sing out,

"You're paying with your data / For this thing you think is free."

It's easy to become inured to clicking "OK" to make these trades just to get on with things; but how much harder to ignore four guys in striped jackets and hats singing full-voice in harmony, arms outflung, two feet from your ears? Yes, yes, in real life it would be spectacularly annoying and wildly labor-intensive (although: jobs!), but for a moment, imagine...it would certainly get users' attention as they traded their data away.

Craddock's main point was that the data protection laws reflect the expectation of their mid-1990s time that we always knew when we were disclosing personal information, just as at one time we knew when we crossed the border into a foreign country's legal jurisdiction and now the crossing is invisible. Today, we disclose information unknowingly: it requires an exercise of deliberate thought to see every typed-in search query as a gift from us to GooBingYa, and the data brokers who swap and trade behind the scenes are completely unknown to the millions whose data they keep. You visit Google, not its fully owned subsidiary DoubleClick; only a tiny minority of obsessed privacy advocates visit Acxiom or Comscore. Under EU data protection law you have the right to file a subject access request for your data file. But who would know to ask these hidden third-party data brokers? And even if you do, you are not their customer. Use the source, Luke.

Cut to: Motherboard, where Brian Merchant lays out what goes on behind the scenes when you search for information on medical conditions. A search for information on diabetes may get you tagged as "diabetes-worried". US health insurers certainly would want to know if a prospective customer might be on the verge of developing a chronic, expensive condition - and prospective employers might like to know, too. Is this what you "consented" to when you typed in your search term and hit ENTER?

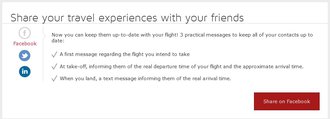

On Tuesday evening I checked in for a flight on Iberia. At completion of check-in, a message popped up, offering me the great idea of sharing with my friends on Facebook. It offered three "practical" messages: one announcing my flight number and departure time; another announcing takeoff and expected flight time; a third announcing my arrival. We talk about the "sharing economy" and this ultimate product placement is an aspect of it: advertising so seamlessly integrated into activities that would formerly have been entirely separate that it's easy not to notice who benefits from the underlying data flow or that it actually *is* advertising. They can reasonably call it opt-in instead of "insidious propaganda".

The European NGO Alliance for Child Safety Online has been discussing the need for legislation to provide children with clearly understandable information, in simple language, about what they're sharing and with whom. It's an unobjectionable idea except for the recurring problem: how? We don't even know how to do this for adults: hence the Southampton workshop.

We do know some things. We know that asking anyone to read lengthy privacy policies and terms and conditions is a meaningless exercise. First, because people hate it and won't do it, even if you make it a bulleted summary. Second, because without the market power to do more than say yes or no, use the service or don't use the service, it's futile. We cannot bargain, object to, or negotiate these contracts. Even phone apps, which are a bit more explicit about what they're asking for, come on a take-it-or-leave-it basis. Over time, if the app world goes the way desktop software did, there may be fewer alternatives to turn to when you don't like the terms than there are now.

We also know that asking users to participate in a lengthy set-up process to embed their preferences into some kind of dashboard or basic settings does not work. Most people accept the defaults and get on with things. (I am a member of the weird minority who read all customization options at the outset and configure them all.)

We really do need context-based single questions a user can answer. We really do need it made clear where our data goes, how it's shared, and with whom. But most of all, we need real choices. People seem not to care about privacy because they believe they've already lost. That barbershop quartet needs to bring with them the ability to rewrite the contract.

Wendy M. Grossman is the 2013 winner of the Enigma Award. Her Web site has an extensive archive of her books, articles, and music, and an archive of earlier columns in this series. Stories about the border wars between cyberspace and real life are posted occasionally during the week at the net.wars Pinboard - or follow on Twitter.